Designing a SaaS platform for clinical trial data management

SaaS Platform • End-to-End Design • Workflow & Data Systems • QA & Iteration • Sole Designer

In this project, I was responsible for the end-to-end design of an Electronic Data Capture (EDC) SaaS platform for managing clinical trial data. The scope covered study setup, data collection, and ongoing trial operations, with the goal of keeping clinical data accurate, structured, and accessible throughout the lifecycle of a study.

I designed and refined all product flows, expanding the platform to support features such as dashboards, complex questionnaire logic, data validation, workflow management, and AI-assisted documentation. I worked in close collaboration with stakeholders, product owners, and developers, especially during implementation and QA.

KEY CHALLENGES

High Data Density & Cognitive Load

Managing trial data involves large volumes of structured information, conditional logic, and multi-step workflows. The challenge was creating interfaces that remained clear and usable without being overwhelming.

Requirements to Product Decisions

Many requirements originated from high-level stakeholder input and needed to be interpreted and transformed into concrete, implementable design solutions.

Usability vs System Complexity

The platform needed to support complex behaviors such as questionnaire logic, validation rules, and workflow dependencies, while maintaining a consistent and intuitive user experience.

DESIGN DECISIONS

FOUNDATION

1. Study Initialization

2. Study Language Definition

3. Roles & Permissions

(enables parallel work and prevents unauthorized edits)

STRUCTURE

4. Define Study Location

5. Define Cohorts / Arms

SCHEDULING

6. Visit & Event Schedule

7. Create and Assign Forms to Visits & Events

LOGIC & CONFIGURATION

8. Edit Checks & Conditional Logic

(depends on fields, forms, and visits)

9. Randomization Configuration

(depends on eligibility, visits, and roles)

FINALIZATION

10. Translations

(defined after content is stable)

11. Validation & Preview

12. Study Freeze, Versioning & Approval

Data Density and Clarity

Information in clinical trials is dense, highly structured, and handled by multiple roles over long periods of time. This required designing interfaces that could be easily understood, revisited, and handed off between users without losing context.

To support this, information was progressively disclosed and organized into structured sections, with visual hierarchy and spacing guiding attention. This made complex forms easier to navigate and improved efficiency when inputting and reviewing data.

Simplified representation of the study setup workflow.

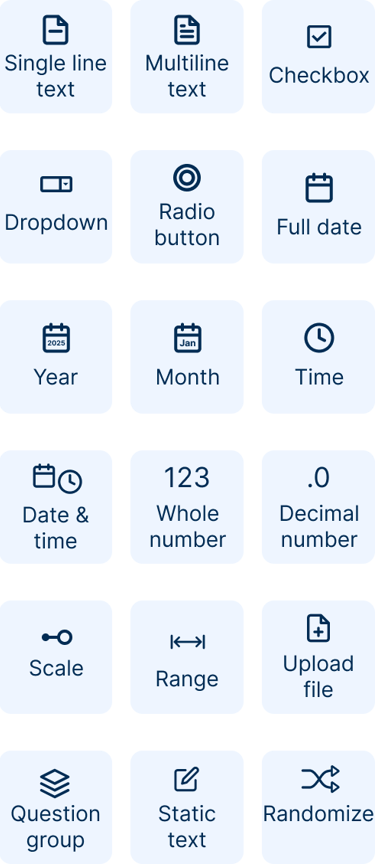

Complexity and Flexibility

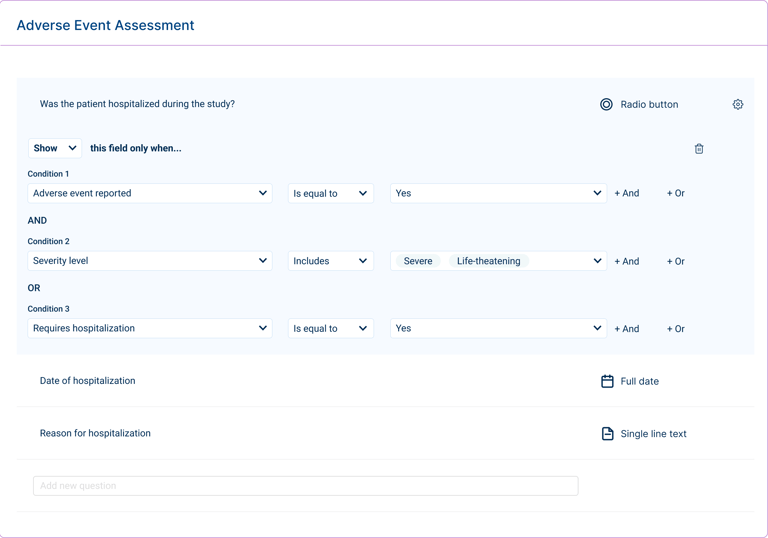

Clinical trial configuration involves complex logic, dependencies between fields and visits, and evolving study requirements. While users are familiar with these concepts, the challenge lies in translating them into a system where they can be configured clearly and consistently.

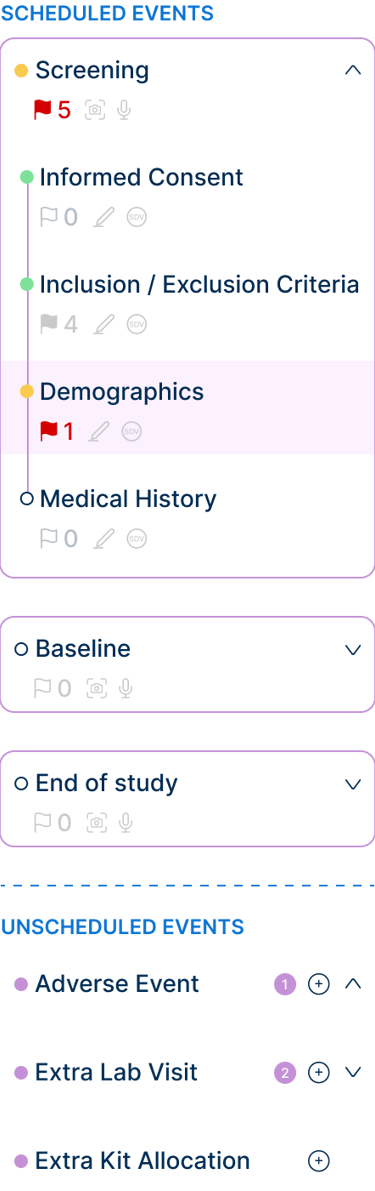

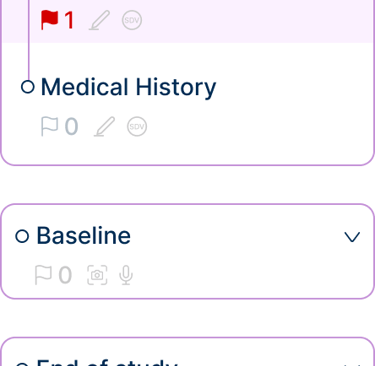

To address this, logic was structured into simple, predictable interactions that make conditions and relationships easier to define. The system also supports flexible study configurations, including dynamic forms, unscheduled events, and evolving setups, without overwhelming users.

Condition builder for defining questionnaire logic and field dependencies.
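
As an illustration only, the kind of rule a condition builder like this produces could be modeled as a small data structure. This is a minimal sketch under stated assumptions, not the platform's actual implementation: the names `Condition`, `VisibilityRule`, and `OPERATORS`, the operator set, and the example field names are all hypothetical.

```python
import operator
from dataclasses import dataclass
from typing import Any, Callable

# Hypothetical operator set; a real condition builder may offer others.
OPERATORS: dict[str, Callable[[Any, Any], bool]] = {
    "equals": operator.eq,
    "not_equals": operator.ne,
    "greater_than": operator.gt,
    "less_than": operator.lt,
}


@dataclass(frozen=True)
class Condition:
    """One row of the builder: <field> <operator> <value>."""
    field: str
    op: str
    value: Any

    def holds(self, answers: dict[str, Any]) -> bool:
        # An unanswered field never satisfies a condition.
        if self.field not in answers:
            return False
        return OPERATORS[self.op](answers[self.field], self.value)


@dataclass(frozen=True)
class VisibilityRule:
    """Show the target field only when every condition holds (assumed AND logic)."""
    target: str
    conditions: tuple[Condition, ...]

    def is_visible(self, answers: dict[str, Any]) -> bool:
        return all(c.holds(answers) for c in self.conditions)


# Illustrative rule with made-up field names.
rule = VisibilityRule(
    target="smoking_years",
    conditions=(
        Condition("smoker", "equals", "yes"),
        Condition("age", "greater_than", 17),
    ),
)
```

Keeping each condition to a single field, operator, and value mirrors the goal of simple, predictable interactions: a rule reads the same way in the interface as it does in the data.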

Workflow Structure and Dependencies

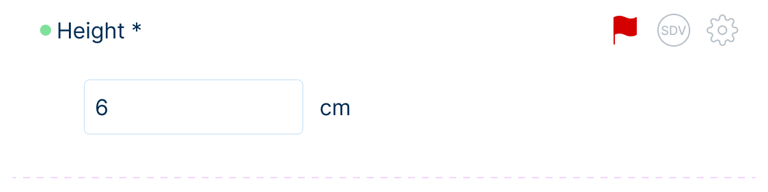

Selected interface fragments illustrating data entry, navigation, and configuration.

Designing for clinical trials required structuring workflows that span multiple stages, dependencies, and user roles. Actions such as defining study structure, configuring logic, and scheduling events are interdependent, meaning that decisions made early in the process directly impact later steps.

To address this, the system was organized into a clear, step-by-step workflow, guiding users through the study setup process while respecting these dependencies. Each stage builds on the previous one, reducing errors and ensuring that configurations are completed in a logical order.
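
The dependency-aware ordering described above can be sketched as a prerequisite map that determines which setup steps are unlocked at any point. This is a hedged illustration rather than the platform's real logic: the step identifiers and the exact prerequisite edges are assumptions loosely based on the setup stages listed earlier, and `unlocked_steps` and `setup_order` are hypothetical helpers.

```python
from graphlib import TopologicalSorter

# Hypothetical prerequisite map: each step lists the steps that must be
# completed first. The edges are assumptions for illustration only.
PREREQUISITES: dict[str, set[str]] = {
    "study_initialization": set(),
    "roles_and_permissions": {"study_initialization"},
    "visit_schedule": {"study_initialization"},
    "forms": {"visit_schedule"},
    "edit_checks": {"forms", "visit_schedule"},
    "randomization": {"visit_schedule", "roles_and_permissions"},
    "translations": {"forms", "edit_checks"},
}


def unlocked_steps(completed: set[str]) -> list[str]:
    """Return unfinished steps whose prerequisites are all completed."""
    return sorted(
        step
        for step, needs in PREREQUISITES.items()
        if step not in completed and needs <= completed
    )


def setup_order() -> list[str]:
    """Return one valid order in which every step follows its prerequisites."""
    return list(TopologicalSorter(PREREQUISITES).static_order())
```

For example, `unlocked_steps({"study_initialization"})` offers roles and the visit schedule next, while edit checks stay locked until forms exist. That matches the idea of each stage building on the previous one.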

ITERATION & REFINEMENT

Some decisions were adapted to align with technical constraints and priorities, requiring simplification without compromising usability. I was also involved in QA, testing features, validating reported issues, and resolving design-related bugs to ensure alignment between intended and actual behavior.

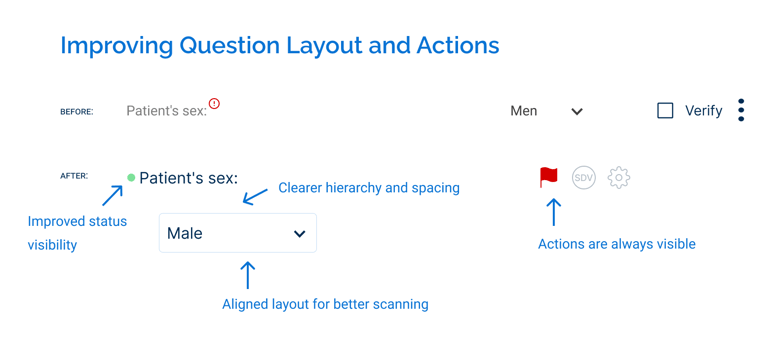

As part of the redesign, existing components were reworked to improve usability. For example, the question component was redesigned to make key information and actions more visible, replacing hidden interactions with persistent elements and improving layout consistency.

Following implementation, the design was further refined based on developer and user feedback. For instance, actions that were previously hidden on hover were made persistently visible after users had difficulty discovering them.

Additional refinements to interactions, tooltips, and element placement helped improve clarity and usability over time while maintaining consistency across the system.

OUTCOMES & REFLECTIONS

Designing for systems, not screens, means thinking beyond individual interfaces and focusing on workflows, dependencies, and how information flows across the product.

Translating familiar domain concepts into a product experience requires clear guidance, as users may understand the subject matter but still need support in configuring it within the system.

Balancing complexity and usability is essential in data-heavy systems, ensuring the experience remains intuitive without limiting flexibility.